If you read the documentation on SSRS 2008 integrated mode, it pretty much tells you to create your reports in BIDS and go through the publishing steps, or simply upload your Reporting Services artifacts to the designated document libraries. This is nice and easy, but it sucks big time!

First off, no offense, but the majority of people who do deployments have absolutely no clue how to use BIDS! Secondly, BIDS is not necessarily available on the production servers. Thirdly, there are many scary clients out there who won't entertain anything except full automation of your development bits, including your reports, data models, data sources, etc. All this calls for an automated solution that takes care of deploying your reports and all of their dependencies.

Well, we have a problem though! While you can upload report definitions (.rdl) and report models (.smdl) straight to a SharePoint library, this does NOT apply to data source (.rds) files! During publication, design tools (i.e. BIDS) create a new .rsds file from the original .rds file and publish that file instead; that's what they do under the hood. They literally convert the .rds file to its .rsds counterpart, which contains pretty much the same content but in a different schema! This process very much resembles what the InfoPath publishing wizard does when it turns UDC files into UDCX files for browser-enabled forms.

Truth be told, if you want to fully automate your deployment, you have to write your own converter and perform the deployment using the SharePoint feature framework and the SSRS proxy endpoint (ReportService2006.asmx). I am not sure how difficult it would have been for the SSRS team to provide another web method that takes a .rds file and publishes it to a SharePoint document library!! The functionality is there and is used by the design tools, right? They just didn't expose it to us!

Okay, I just wrapped up a POC that demonstrates how you can accomplish this. You can download the code here.

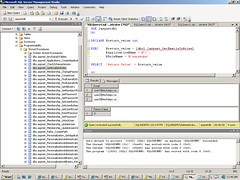

1. Craft up a project and include the shared data source and all of your reports like below:

BTW, I am using WSPBuilder for this project.

2. In feature.xml, put in the following CAML code snippet:

<?xml version="1.0" encoding="utf-8"?>

<Feature Id="9910ff1d-9f2e-4081-a02d-afdf53f9f605"

Title="Top20Products"

Description="This Feature adds the Top 20 Products Report and its datasource"

Version="12.0.0.0"

Hidden="FALSE"

Scope="Web"

DefaultResourceFile="core"

ReceiverAssembly="Top20Products, Version=1.0.0.0, Culture=neutral, PublicKeyToken=a7ad40de4a1b3be7"

ReceiverClass="Top20Products.Top20Products"

xmlns="http://schemas.microsoft.com/sharepoint/">

<ActivationDependencies>

<ActivationDependency FeatureId="C88C4FF1-DBF5-4649-AD9F-C6C426EBCBF5"/>

</ActivationDependencies>

<ElementManifests>

<ElementFile Location="DtSourceNorthwind.rds" />

<ElementFile Location="RsProducts.rdl" />

</ElementManifests>

</Feature>

As you can tell, the feature manifest references RsProducts.rdl and DtSourceNorthwind.rds as ElementFile entries inside the ElementManifests node. In this blog post I am assuming that each feature pack contains multiple reports that share one data source. If you have other reports that don't share the data source, just create more features. Who cares? Too many features?!! Well, you can hide them all and use a hub feature that activates all of the child features when it gets activated, and deactivates them when it gets deactivated. No worries!
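The hub feature idea can be sketched roughly as follows. This is only a sketch, not part of the POC: `HubFeature` is a hypothetical helper, and the actual SharePoint calls are passed in as delegates so the code compiles without the SharePoint assemblies. Children are deactivated in reverse order, which is a design choice of this sketch rather than a requirement.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical helper for a "hub" feature receiver (not part of the POC).
public static class HubFeature
{
    // Activates every child feature in the order given.
    // "activate" stands in for the real SharePoint call.
    public static void ActivateAll(IList<Guid> childFeatureIds, Action<Guid> activate)
    {
        for (int i = 0; i < childFeatureIds.Count; i++)
        {
            activate(childFeatureIds[i]);
        }
    }

    // Deactivates the child features in reverse order of activation.
    public static void DeactivateAll(IList<Guid> childFeatureIds, Action<Guid> deactivate)
    {
        for (int i = childFeatureIds.Count - 1; i >= 0; i--)
        {
            deactivate(childFeatureIds[i]);
        }
    }
}
```

In the hub's FeatureActivated you would presumably pass something like `delegate(Guid id) { web.Features.Add(id); }`, and in FeatureDeactivating the matching `web.Features.Remove(id)`; treat both as assumptions to verify against your SharePoint version.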

One more thing about the feature above: since I am using a Data Connection Library and a Report Library to host my SSRS artifacts, the activation dependency is there to ensure that the enterprise feature is already turned on.

Let’s move on and have a look at the code in the feature receiver:

[CSharp]

public override void FeatureActivated(SPFeatureReceiverProperties properties)

{

SPWeb web = properties.Feature.Parent as SPWeb;

ReportingService2006 rs06 = new ReportingService2006();

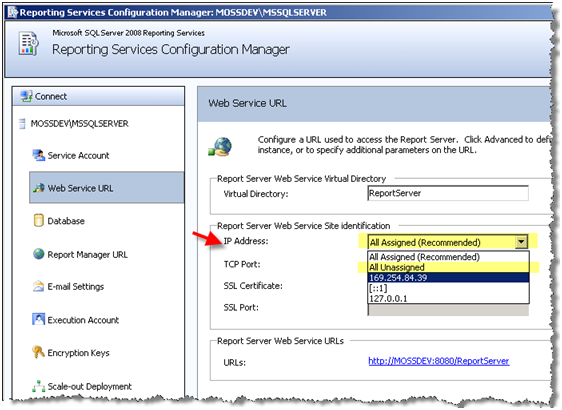

rs06.Url = web.Url + “/_vti_bin/ReportServer/ReportService2006.asmx”;

rs06.Credentials = System.Net.CredentialCache.DefaultCredentials;

…

}

[/CSharp]

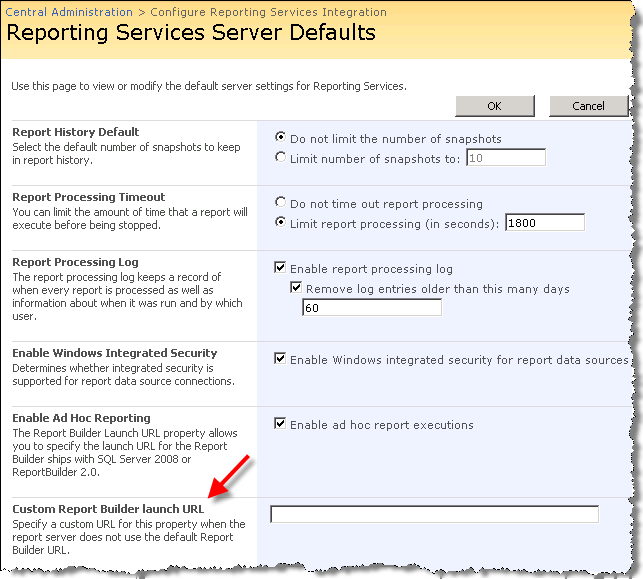

In the FeatureActivated method, I first initialize the SSRS proxy endpoint, which the SSRS Add-in has already nicely virtualized and made context aware for me. The security context under which this Web service call executes is determined by how you set up the SSRS authentication mode in the Central Administration site. Read my post here.

[CSharp]

// Locate this feature's folder under TEMPLATE\FEATURES on the web front end.
string featurePath = string.Format(@"{0}\FEATURES\{1}\", SPUtility.GetGenericSetupPath("Template"), @"Top20Products");

string[] reportPaths = Directory.GetFiles(featurePath, "*.rdl");
string[] dataSourcePath = Directory.GetFiles(featurePath, "*.rds");

// Exactly one shared data source is expected per feature pack.
if (dataSourcePath.Length != 1)
    throw new Exception("Oops! Expected exactly one shared data source per feature pack.");

[/CSharp]

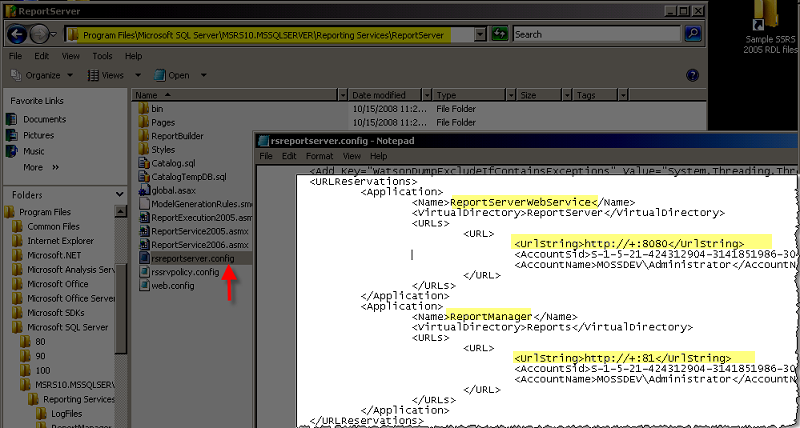

So the plan is simple: copy the reports and their shared data source over to the feature folder, so that when the feature gets activated I can call ReportService2006.asmx to publish the reports, create the data source and finally fix up the data source reference in the deployed reports. Since the reports and their data source are already deployed to the feature folder, I can simply get a reference to the files on the WFE server.

[CSharp]

XmlNode extensionNode = null;
XmlNode connectStringNode = null;
XmlNode rptDataSourceNameNode = null;

// Swap only the extension (.rds -> .rsds); Replace("rds", "rsds") would also hit "rds" elsewhere in the name.
fileName = Path.ChangeExtension(Path.GetFileName(dataSourcePath[0]), ".rsds");

// Load the BIDS data source file so its settings can be copied over.
stream = File.OpenRead(dataSourcePath[0]);
XmlDocument dsDOM = new XmlDocument();
dsDOM.Load(stream);
stream.Close();

rptDataSourceNameNode = dsDOM.SelectSingleNode("RptDataSource/Name");
XmlNodeList connectionPropertiesNodes = dsDOM.SelectNodes("RptDataSource/ConnectionProperties");
foreach (XmlNode node in connectionPropertiesNodes)
{
    extensionNode = node.SelectSingleNode("Extension");
    connectStringNode = node.SelectSingleNode("ConnectString");
}

// Build the equivalent SSRS data source definition.
DataSourceDefinition dsDefinition = new DataSourceDefinition();
DataSource dSource = new DataSource();
dSource.Item = dsDefinition;
dsDefinition.Extension = extensionNode.InnerText;
dsDefinition.ConnectString = connectStringNode.InnerText;
dsDefinition.ImpersonateUserSpecified = true;
dsDefinition.Prompt = null;
dsDefinition.WindowsCredentials = true;
dsDefinition.CredentialRetrieval = CredentialRetrievalEnum.Integrated;
dSource.Name = rptDataSourceNameNode.InnerText;

string parentDataSourceLibrary = "http://mossdev/DemoDCL";
string fullDSUrl = string.Concat(parentDataSourceLibrary, "/", fileName);

// Create (or overwrite) the .rsds item in the Data Connection Library.
rs06.CreateDataSource(fileName, parentDataSourceLibrary, true, dsDefinition, null);

[/CSharp]

As you can tell, in the receiver class I am loading the .rds file into an XmlDocument object, which I can then traverse to programmatically create the equivalent .rsds data source. Please note that, for simplicity's sake, I am only converting a simple data source (look at the source code), but be my guest if you'd like to deserialize the .rds data source into a full-blown business object to cover more complex data source types. I am just trying to keep the POC simple and focused on the topic!
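If you do want a small business object instead of loose XmlNode variables, a minimal sketch could look like this. `RdsInfo` and `RdsReader` are hypothetical names, and the XML layout is assumed to match the XPath queries used in the receiver (Name, Extension and ConnectString as elements); check it against the .rds files your version of BIDS actually produces.

```csharp
using System;
using System.Xml;

// Hypothetical holder for the handful of .rds settings the receiver needs.
public sealed class RdsInfo
{
    public string Name { get; set; }
    public string Extension { get; set; }
    public string ConnectString { get; set; }
}

public static class RdsReader
{
    // Parses .rds XML laid out the way the XPath queries in this post expect.
    public static RdsInfo Parse(string rdsXml)
    {
        XmlDocument dom = new XmlDocument();
        dom.LoadXml(rdsXml);

        XmlNode name = dom.SelectSingleNode("RptDataSource/Name");
        XmlNode extension = dom.SelectSingleNode("RptDataSource/ConnectionProperties/Extension");
        XmlNode connect = dom.SelectSingleNode("RptDataSource/ConnectionProperties/ConnectString");

        // Fail early with a readable message instead of a NullReferenceException later on.
        if (name == null || extension == null || connect == null)
        {
            throw new FormatException("The .rds content is missing Name, Extension or ConnectString.");
        }

        RdsInfo info = new RdsInfo();
        info.Name = name.InnerText;
        info.Extension = extension.InnerText;
        info.ConnectString = connect.InnerText;
        return info;
    }
}
```

With something like this in place, the receiver could call `RdsReader.Parse(File.ReadAllText(dataSourcePath[0]))` and copy the three values onto the DataSourceDefinition.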

Quite frankly, I don't care much about setting data sources properly during deployment. You can just create dummy data sources, reference those in your reports and, once everything is deployed, go to the Data Connection Library and fix up the data sources. As part of the integration, you can set data source properties right from the SharePoint UI. Plus, you should be using shared data sources as much as possible, so there shouldn't be a crazy number of data sources in your deployment.

I also hardcoded the parentDataSourceLibrary path, which points to a Data Connection Library. You should either programmatically create the Report and Data Connection libraries in your feature receiver or use the same (or another) feature to create these doc libs using CAML. I assumed that the lists are already there. What a lazy a$$ I am 😉
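One small step away from the hardcoded paths is to derive the library URLs from the site the feature is activated on. The helper below is a hypothetical sketch (`LibraryUrl` is not part of the POC), and it assumes the library's URL name sits directly under the web, as DemoDCL and DemoReports do in this post.

```csharp
using System;

// Hypothetical helper: builds a library URL from a site URL and a library URL name.
public static class LibraryUrl
{
    public static string Combine(string webUrl, string libraryName)
    {
        if (string.IsNullOrEmpty(webUrl))
            throw new ArgumentException("webUrl must not be empty.", "webUrl");
        if (string.IsNullOrEmpty(libraryName))
            throw new ArgumentException("libraryName must not be empty.", "libraryName");

        // Normalize slashes so both "http://host" and "http://host/" work.
        return webUrl.TrimEnd('/') + "/" + libraryName.Trim('/');
    }
}
```

In the receiver, `LibraryUrl.Combine(web.Url, "DemoDCL")` would then replace the literal assigned to parentDataSourceLibrary.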

[CSharp]

Warning[] warnings = null;

foreach (string rptPath in reportPaths)
{
    fileName = Path.GetFileName(rptPath);

    // Read the whole report definition; a single Stream.Read call is not guaranteed to fill the buffer.
    byte[] definition = File.ReadAllBytes(rptPath);

    string parentReportLibrary = "http://mossdev/DemoReports";
    string fullReportURL = string.Concat(parentReportLibrary, "/", fileName);

    // Publish (or overwrite) the report in the Report Library.
    CatalogItem item = rs06.CreateReport(fileName, parentReportLibrary, true, definition, null, out warnings);

    // warnings may be null when publishing produced none.
    if (warnings != null)
    {
        foreach (Warning warning in warnings)
        {
            // Proceed with logging the warnings
        }
    }

    // Repoint the report's first data source at the shared .rsds created earlier.
    DataSource[] catalogItemDtSrcs = rs06.GetItemDataSources(fullReportURL);
    DataSourceReference reference = new DataSourceReference();
    reference.Reference = fullDSUrl;
    catalogItemDtSrcs[0].Item = reference;
    rs06.SetItemDataSources(fullReportURL, catalogItemDtSrcs);
}

[/CSharp]

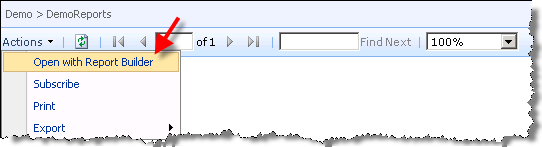

At the end, we just publish the reports to a hardcoded Report Library named "DemoReports" and fix up the wrong data source references. That's basically it. Just build and deploy the project. Upon successful deployment, you should see a Web-scoped feature that, when activated, injects the data source into a Data Connection Library called DemoDCL and the report into a Report Library called DemoReports.

*The source code for the sample we did in this blog post can be downloaded here.

*If I get a chance next weekend, I will craft up a PowerShell script that does pretty much the same thing, but without using the feature framework. I will keep you posted here!

1/2 a great rest of your weekend 😉